Sync From Azure Blob Storage

Connect a Datature Vi dataset to an Azure Blob Storage container using a service principal and a scoped role assignment. The sync is read-only, and your blobs stay in your Storage Account.

Datature Vi reads images and videos from an Azure Blob Storage container using a service principal that you authorise inside your Azure tenant. The service principal gets the Storage Blob Data Reader role on a single container, scoped through a condition so it cannot read anything else in the Storage Account. This guide walks through the full setup.

- A paid Datature Vi account. External bucket sync is not available on the free tier.

- An active Azure subscription with Blob Storage configured.

- Azure CLI installed locally and signed in (az login).

- A Storage Account with at least one container and the assets you want to sync.

- Permission to create service principals and assign IAM roles in your subscription.

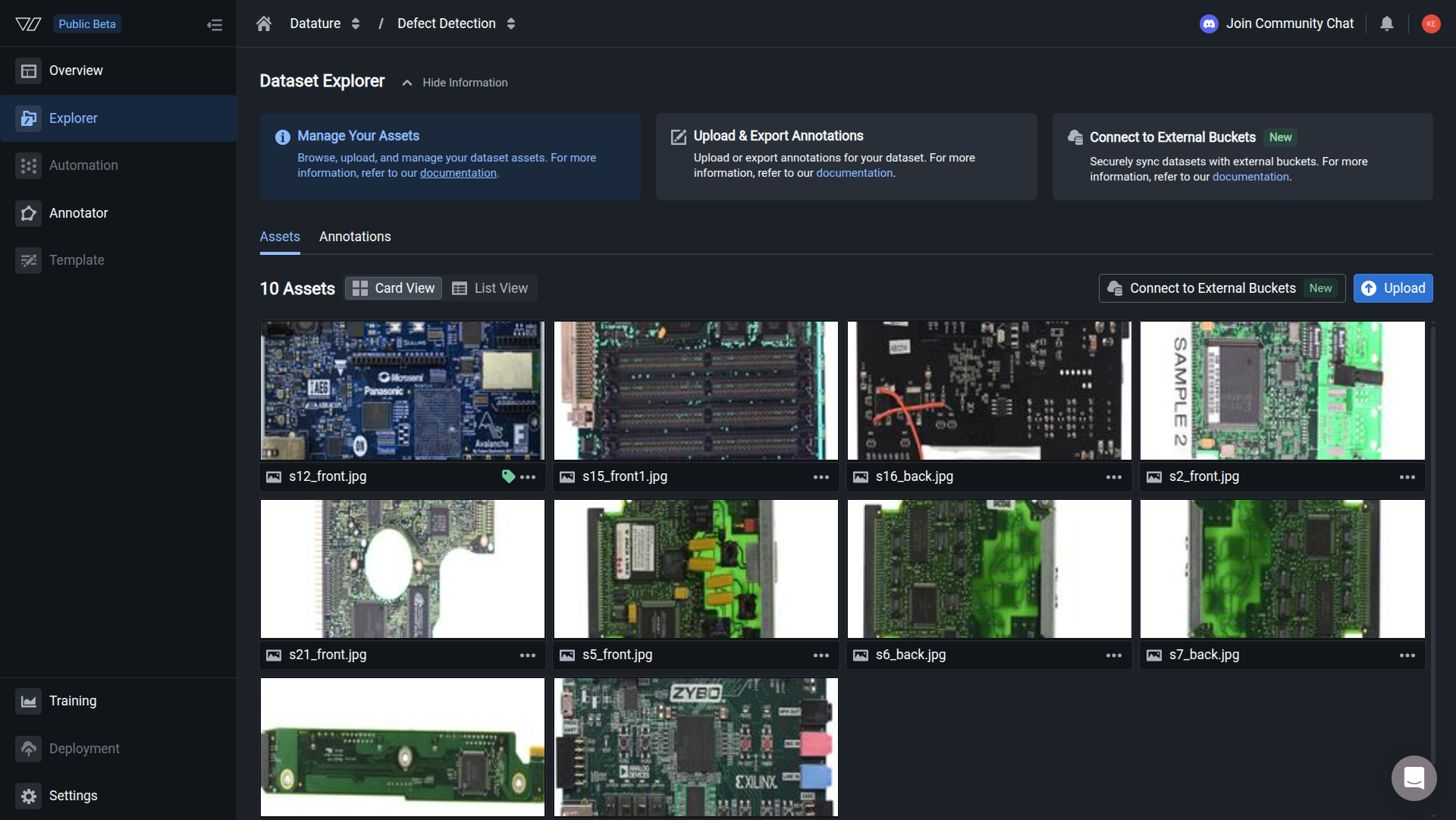

Open the Explorer tab

In the left sidebar, click the Explorer tab on your dataset. This is where the synced assets will appear after the connection is set up.

Synced images appear in the dataset Explorer. The asset count in the header reflects the blobs that passed the format checks.

Step 1: Enter the blob details

Open your dataset, then walk through the wizard.

The Blob Details tab asks for four fields:

Click Next. Vi generates a unique service principal ID and a role assignment identifier for the next step.

Step 2: Create the service principal

Vi shows you a unique application ID. Run this command in a shell where the Azure CLI is signed in, replacing the placeholder with the value Vi gave you:

This registers a service principal in your tenant for the Vi application. The command finishes with a JSON object describing the principal. You do not need to copy any of that output; the next step uses the role assignment identifier from the Vi wizard, not from this command.
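
For reference, the standard Azure CLI command for registering a service principal against an existing application ID is `az ad sp create --id`. The sketch below assumes that is the command the Vi wizard displays; treat the wizard's own text as authoritative if it differs. `sp_create_command` is a hypothetical helper, not part of Vi or the Azure CLI. It checks that the pasted value is a well-formed GUID and prints the command for review instead of running it.

```python
import shlex
import uuid


def sp_create_command(application_id: str) -> list[str]:
    """Build the argv for `az ad sp create --id <application_id>`.

    Assumption: the ID shown by the Vi wizard is a GUID, as Azure
    application IDs are. uuid.UUID raises ValueError if it is not.
    """
    parsed = uuid.UUID(application_id.strip())
    return ["az", "ad", "sp", "create", "--id", str(parsed)]


if __name__ == "__main__":
    # Placeholder value; replace it with the ID from the Vi wizard.
    placeholder_id = "00000000-0000-0000-0000-000000000000"
    print(shlex.join(sp_create_command(placeholder_id)))
```

Printing the command rather than executing it keeps the step reviewable; paste the printed line into the shell where `az login` has already succeeded.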

Step 3: Assign the Storage Blob Data Reader role

Grant the service principal read-only access to the container. The condition below restricts the role to your specific container, so the principal cannot list or read blobs anywhere else in the Storage Account.

Open Access Control on the Storage Account

In the Azure portal, open the Storage Account, then click Access Control (IAM).

Assign the role on the Storage Account, not on the container or an individual blob. The condition scopes access to a single container; assigning the role at the container level breaks the listing call.

Add a role assignment

Click Add, then Add role assignment. Search for and select Storage Blob Data Reader.

Set the member

Switch to the Members tab. Paste the IAM Role Assignment identifier that the Vi wizard generated.

Add the container condition

Switch to the Conditions tab and add a condition. Paste the expression below, replacing <YOUR_CONTAINER_NAME> with the container you entered in Step 1.

    (
     (
      !(ActionMatches{'Microsoft.Storage/storageAccounts/blobServices/containers/blobs/read'})
     )
     OR
     (
      @Resource[Microsoft.Storage/storageAccounts/blobServices/containers:name] StringEquals '<YOUR_CONTAINER_NAME>'
     )
    )

Review and assign

Click Review + assign twice to apply the role.

The role must be assigned at the Storage Account level with the container condition above. If you assign it directly on the container or on an individual blob, the listing API call fails and Vi reports a connection error.
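
This guide covers only the portal flow. If you prefer to script the assignment, the sketch below shows one possible Azure CLI equivalent using `az role assignment create` with its `--condition` and `--condition-version` options. The helper names, and the subscription, resource group, and account parameters, are assumptions for illustration. The condition string is the same expression as in the Conditions tab, collapsed onto one line, and the scope is the Storage Account, as required above.

```python
import re
import shlex

BLOB_READ_ACTION = (
    "Microsoft.Storage/storageAccounts/blobServices/containers/blobs/read"
)
CONTAINER_NAME_ATTR = (
    "Microsoft.Storage/storageAccounts/blobServices/containers:name"
)


def container_condition(container_name: str) -> str:
    """Render the container-scoping condition for one container."""
    # Azure container names: 3-63 chars, lowercase letters, digits, and
    # single hyphens, starting and ending with a letter or digit.
    valid = re.fullmatch(r"[a-z0-9][a-z0-9-]{1,61}[a-z0-9]", container_name)
    if not valid or "--" in container_name:
        raise ValueError(f"invalid container name: {container_name!r}")
    return (
        f"((!(ActionMatches{{'{BLOB_READ_ACTION}'}})) OR "
        f"(@Resource[{CONTAINER_NAME_ATTR}] StringEquals '{container_name}'))"
    )


def role_assignment_command(
    assignee: str,          # identifier generated by the Vi wizard
    subscription_id: str,   # hypothetical parameter names from here down
    resource_group: str,
    storage_account: str,
    container_name: str,
) -> list[str]:
    """Build the argv for a Storage Account scoped, conditioned assignment."""
    scope = (
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Storage/storageAccounts/{storage_account}"
    )
    return [
        "az", "role", "assignment", "create",
        "--assignee", assignee,
        "--role", "Storage Blob Data Reader",
        "--scope", scope,
        "--condition", container_condition(container_name),
        "--condition-version", "2.0",
    ]


if __name__ == "__main__":
    # All values below are placeholders; nothing is executed.
    argv = role_assignment_command(
        "<ASSIGNEE_FROM_VI>", "<SUBSCRIPTION_ID>", "<RESOURCE_GROUP>",
        "<STORAGE_ACCOUNT>", "images",
    )
    print(shlex.join(argv))
```

Building the scope from the Storage Account path, rather than a container path, mirrors the portal instruction: the condition does the narrowing, not the scope.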

Step 4: Configure CORS (videos only)

You only need this step if your container holds video assets. Image previews work without CORS.

Open Resource sharing (CORS)

In the Storage Account, click Settings, then Resource sharing (CORS).

Add the Vi origin

Add a CORS rule under the Blob service tab using GET as the allowed method.

Save

Click Save. The change takes effect immediately.
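
The same rule can likely be added from the command line with `az storage cors add`. The sketch below is an assumption-laden equivalent of the portal steps: `cors_command` is a hypothetical helper, the origin is a parameter because this guide does not state the Vi origin, and only the GET method named above is set. Use the origin shown in the Vi wizard or documentation.

```python
import shlex
from urllib.parse import urlsplit


def cors_command(storage_account: str, origin: str) -> list[str]:
    """Build the argv for a Blob service CORS rule that allows GET."""
    parts = urlsplit(origin)
    # An origin is scheme plus host (and optional port), with no path,
    # query string, or fragment.
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"not a valid origin: {origin!r}")
    if parts.path or parts.query or parts.fragment:
        raise ValueError(f"origin must not include a path: {origin!r}")
    return [
        "az", "storage", "cors", "add",
        "--services", "b",           # b = Blob service
        "--methods", "GET",
        "--origins", origin,
        "--account-name", storage_account,
    ]


if __name__ == "__main__":
    # Placeholder account and origin; nothing is executed.
    print(shlex.join(cors_command("<STORAGE_ACCOUNT>", "https://example.invalid")))
```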

Step 5: Confirm the connection

Back in the Vi wizard, click Next to test the connection. Azure can take up to five minutes to propagate the role assignment, so a connection failure right after Step 3 does not always mean something is wrong.

If the test fails, wait a few minutes and click Retry. If the failure persists, jump to the troubleshooting section.
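
If you script your own readiness check instead of clicking Retry by hand, the wait-and-retry guidance above can be sketched as a bounded polling loop. Everything here is illustrative: `wait_for_connection` and the `check` callable are hypothetical, the 300-second window comes from the five-minute propagation figure, and the 30-second interval is an assumption.

```python
import time
from typing import Callable


def wait_for_connection(
    check: Callable[[], bool],
    timeout_s: float = 300.0,    # five-minute propagation window
    interval_s: float = 30.0,    # assumed pause between attempts
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Call check() until it returns True or the window is used up.

    Returns True on the first success, False if the next attempt would
    fall after the deadline.
    """
    deadline = clock() + timeout_s
    while True:
        if check():
            return True
        if clock() + interval_s > deadline:
            return False
        sleep(interval_s)
```

A `False` result after the full window corresponds to the persistent failure case, where the troubleshooting section is the next stop. The `sleep` and `clock` parameters exist only so the loop can be exercised without real waiting.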

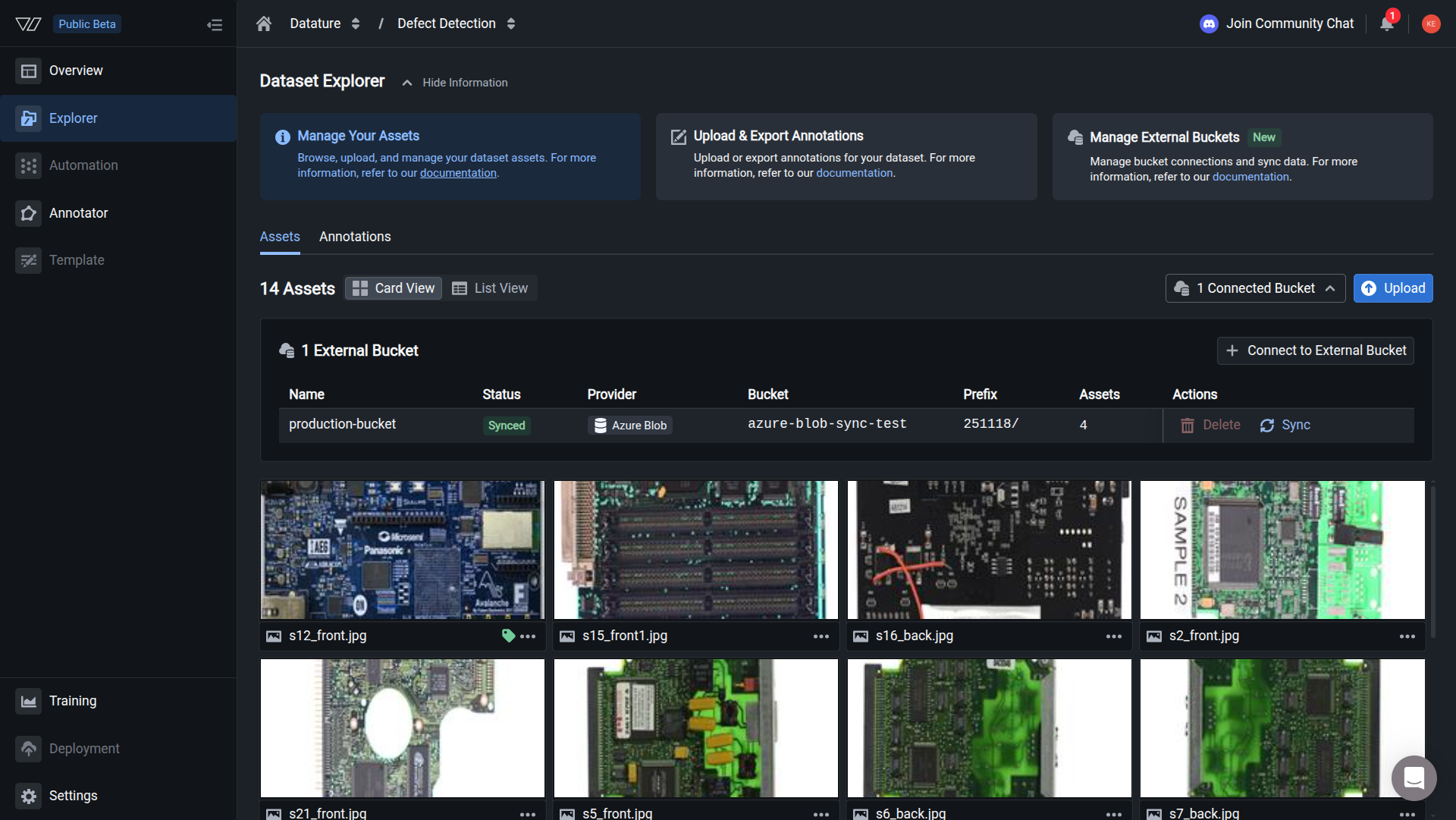

Step 6: Sync your assets

Choose Sync Now to start the first sync immediately, or Sync Later to set up the connection without syncing. If you pick Sync Now, Vi walks through three more screens before the sync starts in earnest.

- Preview Files to Sync. Vi scans the container prefix and shows the file count alongside a sample of blob paths. Confirm the preview matches what you expect, then click Sync.

- Sync Started. A confirmation appears letting you know the job is running in the background. Click I Understand to dismiss the dialog; the sync continues even after you close the wizard or the browser tab.

- Track progress. Open the Connected Bucket dropdown in the top-right of the Explorer to see the connection name, status, provider, container, prefix, asset count, and a live progress bar while blobs are retrieved.

The first sync takes 5 to 40 minutes depending on the container size.

Asset requirements

Blobs in the container must meet the same format rules as direct uploads.

Annotations are not part of the bucket sync. Vi reads only image and video metadata from the Azure container. If you have existing labels in COCO, YOLO, Pascal VOC, CSV, or Vi JSONL, upload them directly to Vi once the assets finish syncing.

Troubleshooting

